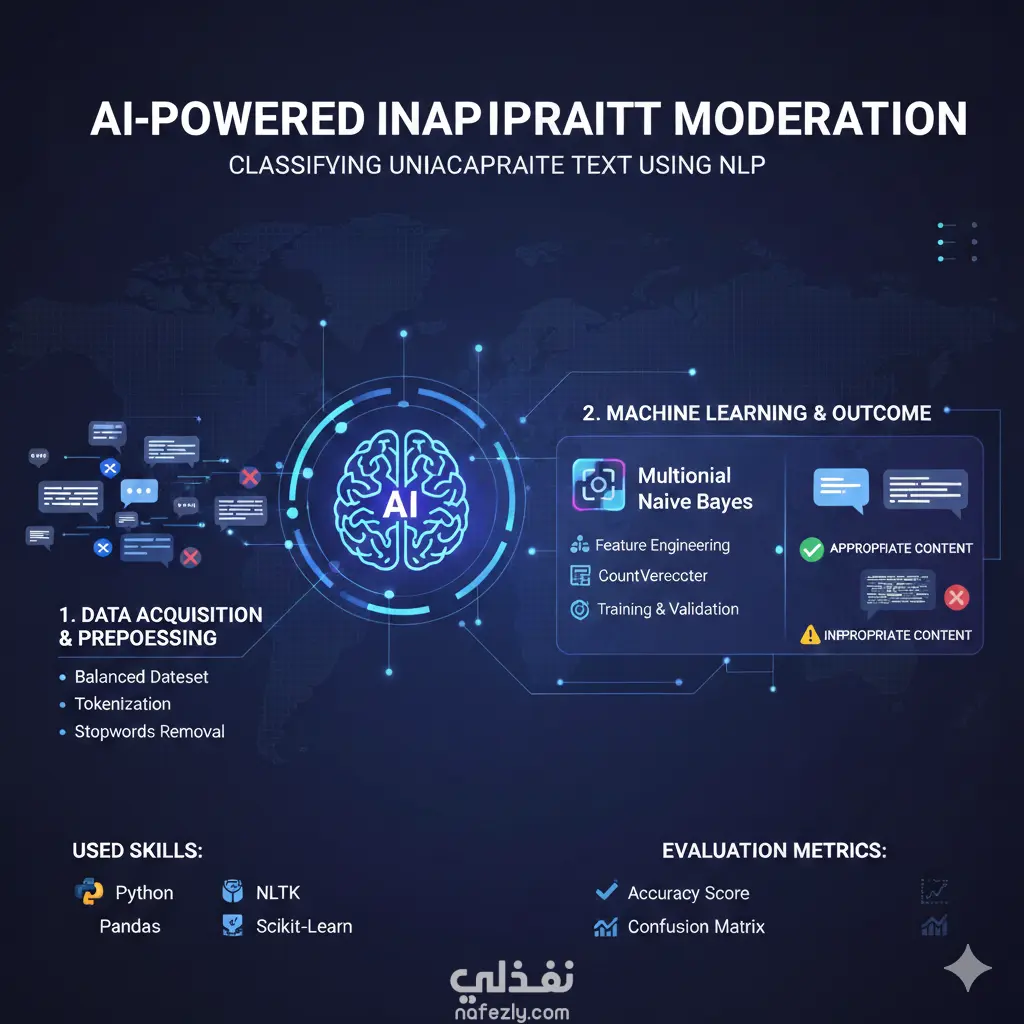

ToxicityShield: Automated NLP Classifier for Social Media Moderation

Project Details

ToxicityShield is an end-to-end Natural Language Processing (NLP) pipeline that identifies and categorizes toxic content in social media datasets. Built in Python with standard machine learning libraries, it addresses the need for automated moderation in online spaces. Raw tweet data first goes through a cleaning and normalization stage that uses NLTK for tokenization, lemmatization, and stop-word removal, so the model receives consistent input. A Multinomial Naive Bayes classifier trained on Count Vectorization features then separates neutral text from harmful text.

Technical Highlights

- Data pipeline: A preprocessing function that reduces noise in the text by removing special characters and normalizing case.
- Model performance: 91.2% classification accuracy on balanced test sets.
- Feature engineering: A Bag-of-Words (BoW) representation that turns unstructured text into high-dimensional count vectors for model training.
- Evaluation: Results checked with confusion matrices, with particular attention to false negatives (toxic posts classified as neutral), since those are the errors that let harmful content through.
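The pipeline described above (clean, vectorize with Bag-of-Words, classify with Multinomial Naive Bayes, inspect the confusion matrix) can be sketched in plain Python. This is a minimal, dependency-free illustration, not the project's code. The stop-word list, the class and function names, and the toy sentences are all hypothetical. Lemmatization is omitted, and NLTK's tokenizer is replaced by a regex plus `split()`. In practice, scikit-learn's `CountVectorizer`, `MultinomialNB`, and `sklearn.metrics.confusion_matrix` are the usual library equivalents, although the source does not say which library the project used.

```python
import math
import re

# Hypothetical stop-word list for illustration; the project uses NLTK's stop words,
# tokenizer, and lemmatizer, which this sketch replaces with a regex and split().
STOP_WORDS = {"a", "an", "and", "are", "for", "i", "is", "of", "the", "this", "to", "what", "you"}


def preprocess(text):
    """Lowercase, replace non-letter characters with spaces, split, drop stop words."""
    text = re.sub(r"[^a-z\s]", " ", text.lower())
    return [tok for tok in text.split() if tok not in STOP_WORDS]


class BagOfWords:
    """Minimal count vectorizer: one column per vocabulary word, cell = word count."""

    def fit(self, docs):
        vocab = sorted({tok for doc in docs for tok in doc})
        self.vocabulary = {tok: i for i, tok in enumerate(vocab)}
        return self

    def transform(self, docs):
        rows = []
        for doc in docs:
            counts = [0] * len(self.vocabulary)
            for tok in doc:
                idx = self.vocabulary.get(tok)
                if idx is not None:  # words unseen during fit are ignored
                    counts[idx] += 1
            rows.append(counts)
        return rows


class MultinomialNaiveBayes:
    """Multinomial Naive Bayes with additive (Laplace) smoothing, scored in log space."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha

    def fit(self, X, y):
        self.classes = sorted(set(y))
        n_features = len(X[0])
        self.log_prior, self.log_likelihood = {}, {}
        for c in self.classes:
            rows = [x for x, label in zip(X, y) if label == c]
            self.log_prior[c] = math.log(len(rows) / len(X))
            totals = [sum(col) for col in zip(*rows)]  # per-word counts within class c
            denom = sum(totals) + self.alpha * n_features
            self.log_likelihood[c] = [math.log((t + self.alpha) / denom) for t in totals]
        return self

    def predict(self, X):
        preds = []
        for x in X:
            # log P(c) + sum over words of count * log P(word | c)
            scores = {
                c: self.log_prior[c] + sum(n * lp for n, lp in zip(x, self.log_likelihood[c]))
                for c in self.classes
            }
            preds.append(max(scores, key=scores.get))
        return preds


def confusion_matrix(y_true, y_pred, labels):
    """Rows are actual labels, columns are predicted labels."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


# Toy data for illustration only (1 = toxic, 0 = neutral); not the project's dataset.
train_texts = [
    "You are such an idiot!!",
    "I hate you, you stupid clown",
    "What a stupid, hateful post",
    "Thanks for sharing, great thread",
    "I love this community",
    "Great game last night!",
]
train_labels = [1, 1, 1, 0, 0, 0]
test_texts = ["stupid idiot", "love this great thread", "hate this stupid game"]
test_labels = [1, 0, 1]

vectorizer = BagOfWords().fit([preprocess(t) for t in train_texts])
X_train = vectorizer.transform([preprocess(t) for t in train_texts])
X_test = vectorizer.transform([preprocess(t) for t in test_texts])

model = MultinomialNaiveBayes(alpha=1.0).fit(X_train, train_labels)
predictions = model.predict(X_test)
cm = confusion_matrix(test_labels, predictions, labels=[0, 1])
print("predictions:", predictions)
print("confusion matrix:", cm)
print("false negatives (toxic predicted as neutral):", cm[1][0])
```

Laplace smoothing (`alpha=1.0`) keeps a word that never appeared in one class from driving that class's probability to zero. Summing log-probabilities instead of multiplying raw probabilities avoids numeric underflow on longer posts. In the confusion matrix, the cell at row "toxic", column "neutral" (`cm[1][0]`) is the false-negative count that the evaluation step focuses on.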

Skills